
Construct validity and predictive utility of internal assessment in undergraduate medical education

2 Department of Physiology, Christian Medical College and Hospital, Ludhiana 141008, Punjab, India

3 Department of Paediatrics, Christian Medical College and Hospital, Ludhiana 141008, Punjab, India

Corresponding Author:

Dinesh K Badyal

Department of Pharmacology, Christian Medical College and Hospital, Ludhiana 141008, Punjab, India

dineshbadyal@gmail.com

How to cite this article: Badyal DK, Singh S, Singh T. Construct validity and predictive utility of internal assessment in undergraduate medical education. Natl Med J India 2017;30:151-154

Abstract

Background. Internal assessment forms part of all medical college examinations in India. It can help teachers take remedial action and guide learning. However, its utility and acceptability are doubted because, with no external control, internal assessment is considered prone to misuse. It is therefore not used as a tool for learning. There is no study from India on the validity of internal assessment.

Methods. We use multiple methods and multiple teachers to assess students, and our records are well maintained. We analysed the internal assessment scores at our institute. We correlated the internal assessment marks with the university marks obtained by students in one subject from each of the four professional examinations.

Results. There was a positive correlation of university marks with internal assessment marks. The r values ranged from +0.426 to +0.685 and were statistically significant (p<0.01). The percentage of internal assessment marks was higher than the university percentage in all professional examinations except the first.

Conclusions. Internal assessment marks correlate well with marks in university examinations. This provides evidence for construct validity and predictive utility of internal assessment. Internal assessment can predict performance at summative examinations and allow remedial action.

Introduction

In India, student assessment in the undergraduate medical curriculum consists of internal assessment (IA) and summative assessment. The summative assessment, i.e. the university examination at the end of each professional year, is used for pass or fail decisions. IA is conducted by the teachers who have taught the students.[1] It overcomes the limitations of day-to-day variability in performance and allows wider sampling of topics, competencies and skills. In 1997, the Medical Council of India (MCI) made IA mandatory for the assessment of undergraduate medical students. IA carries 20% of the total marks in each subject. Students must secure at least 35% of the total marks fixed for IA in a subject to be eligible to appear in the final university examination in that subject. For example, pharmacology in the second professional carries a total of 150 university marks: a theory part of 110 marks (40 for theory paper A + 40 for theory paper B + 15 for theory viva + 15 for IA) and a practical part of 40 marks (25 for practicals + 15 for IA).[2]
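The mark distribution in the pharmacology example can be checked with a few lines of arithmetic. The sketch below is only an illustration: the dictionary keys and the helper `is_eligible` are hypothetical names, and it assumes, following the wording above, that the 35% eligibility threshold applies to the combined IA maximum for the subject (theory IA plus practical IA).

```python
from fractions import Fraction

# Pharmacology, second professional: mark distribution from the example above.
# Key names are illustrative, not taken from any official regulation text.
THEORY = {"paper_a": 40, "paper_b": 40, "viva": 15, "ia": 15}
PRACTICAL = {"practicals": 25, "ia": 15}

# Eligibility threshold: at least 35% of the total marks fixed for IA.
IA_MIN_PERCENT = 35


def subject_totals() -> tuple[int, int, int]:
    """Return (theory total, practical total, combined IA maximum)."""
    theory = sum(THEORY.values())
    practical = sum(PRACTICAL.values())
    ia_max = THEORY["ia"] + PRACTICAL["ia"]
    return theory, practical, ia_max


def is_eligible(ia_obtained: float, ia_max: int) -> bool:
    """Hypothetical check: True if IA marks reach 35% of the IA maximum.

    Compares ia_obtained * 100 >= 35 * ia_max so the boundary case is
    not affected by floating-point rounding."""
    return ia_obtained * 100 >= IA_MIN_PERCENT * ia_max


theory, practical, ia_max = subject_totals()
grand_total = theory + practical
print(f"theory={theory}, practical={practical}, total={grand_total}")
print(f"IA share of total: {Fraction(ia_max, grand_total)}")
print(f"minimum IA for eligibility: {IA_MIN_PERCENT * ia_max / 100} of {ia_max}")
```

For this subject the script reports a total of 150 marks, an IA share of 1/5 (the 20% weightage) and a minimum of 10.5 IA marks out of 30 under the combined-threshold assumption.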

As per MCI regulations, IA should be based on day-to-day assessment, e.g. assessment of student assignments, preparation for seminars and clinical case presentations.[2] However, it is not clear that these guidelines are followed in all medical colleges. IA marks are used as a passport to appear in the university examination rather than as a tool to improve learning through feedback. Hence, neither teachers nor students regard IA as a tool that can be relied upon with confidence.[3],[4],[5] The various components of IA are also considered subjective, making it untrustworthy.[6] This raises the question of the predictive utility and construct validity of IA. Predictive validity is a subtype of criterion-related validity in which the criterion is a future test, here the university summative examination. If good (or poor) IA performance predicts good (or poor) summative scores, IA has good predictive utility. Our system of IA throughout the year followed by a summative university examination is a good model for evaluating the predictive utility of IA.[7] In medical education, most concepts are constructs. Evidence for construct validity combines several inputs, e.g. content-related evidence, criterion-related evidence, reliability and other related evidence that contribute to validity. The use of multiple methods (both subjective and objective), blueprinting, multiple teachers and day-to-day assessment provides construct-related evidence for the validity of IA.[7],[8]

Although MCI regulations provide guidelines for IA, there has been no study on the utility or validity of this mode of assessment. As IA is a useful component, its predictive utility needs to be studied and ways found to improve it. Hence, to examine the construct validity and predictive utility of IA, we compared the IA marks with university marks in the MBBS course at our institute.

Methods

Our study included marks from four MBBS professional examinations, i.e. first, second, final part-I and final part-II professional. Each batch had 50 students. Of the 200 students, the records of 164 were complete and were included in the study. The IA marks of students in one arbitrarily selected subject from each of the four professionals were collected, along with the total university marks in these subjects. To maintain anonymity, the subjects are not identified.

Process of IA

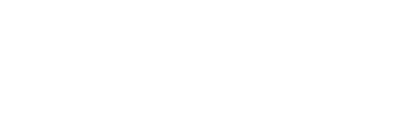

Our institute has designed a system of IA, which has been in use since 1997.[5],[9] It takes into account theory tests, practical tests, seminar preparation and presentation, case presentations, research, quiz participation, subjective assessment and punctuality (Figure 1). Multiple methods of assessment are used and all teachers of the subject are involved. The records are kept up to date and students are given regular feedback. The IA marks are calculated using the formula prescribed by our affiliating university, which has the following components:

Figure 1: Internal assessment record sheet

IA = A (80%, academic marks) + B (10%, subjective assessment) + C (10%, attendance ≥90%)

For example, the pharmacology IA of 15 marks is composed of A (12 marks) + B (1.5 marks) + C (1.5 marks), awarded separately for theory and for practicals.
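A minimal sketch of the A + B + C formula for one IA component (theory or practical). The source does not state how academic marks and subjective assessment are scaled, so this assumes each is first expressed as a fraction of its maximum and then weighted at 80% and 10% of the IA maximum; it also assumes C is awarded in full when attendance is at least 90% and withheld otherwise. The function name `ia_components` is hypothetical.

```python
from fractions import Fraction


def ia_components(ia_max: int, academic_frac: Fraction,
                  subjective_frac: Fraction,
                  attendance_pct: float) -> tuple[Fraction, Fraction, Fraction]:
    """Return (A, B, C) for one IA component, under the stated assumptions.

    academic_frac and subjective_frac are scores as fractions of their
    maxima (0 to 1); Fraction keeps the arithmetic exact."""
    for frac in (academic_frac, subjective_frac):
        if not 0 <= frac <= 1:
            raise ValueError("scores must be fractions between 0 and 1")
    a = Fraction(8, 10) * ia_max * academic_frac    # 80%: academic marks
    b = Fraction(1, 10) * ia_max * subjective_frac  # 10%: subjective assessment
    # 10%: attendance, assumed all-or-nothing at the 90% threshold
    c = Fraction(1, 10) * ia_max if attendance_pct >= 90 else Fraction(0)
    return a, b, c


# Maximum possible split for a 15-mark IA component
a, b, c = ia_components(15, Fraction(1), Fraction(1), 100)
print(float(a), float(b), float(c), float(a + b + c))

# Illustrative student: 75% academic, full subjective marks, 92% attendance
print(float(sum(ia_components(15, Fraction(3, 4), Fraction(1), 92))))
```

With full marks the split reproduces the 12 + 1.5 + 1.5 example above (printed as `12.0 1.5 1.5 15.0`), and the illustrative student receives 12.0 of 15. Whether C is all-or-nothing or graded by attendance is the main assumption to check against the university's actual rule.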

The calculated IA is shown to students regularly, and on demand, throughout the course. Based on their IA marks, students are given feedback to improve their performance in specific areas. Students are asked to sign their IA sheets regularly. The Principal's office is informed every 6 months about students whose IA marks are low, and these students are given remedial instruction. Sometimes parents are also involved.

Statistical analysis

The total IA marks were subtracted from the university marks in a subject to obtain the marks a student scored in the university examination itself. We labelled these marks X marks. The IA marks and X marks in each subject are reported as actual marks and as percentages. The Pearson correlation between total IA marks and X marks was calculated. Pearson's correlation coefficient r measures the strength and direction of a linear relationship between two variables.[10]
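The X-mark calculation and the Pearson correlation described above can be sketched as follows. The marks are made-up illustrative values, not data from this study, and the helper names are hypothetical. The coefficient is computed directly from its definition, r = Σ(x − x̄)(y − ȳ) / √(Σ(x − x̄)² · Σ(y − ȳ)²), so no statistics library is needed.

```python
from math import sqrt


def x_marks(university_totals, ia_totals):
    """X marks = total university marks minus total IA marks, per student."""
    if len(university_totals) != len(ia_totals):
        raise ValueError("mark lists must have the same length")
    return [u - ia for u, ia in zip(university_totals, ia_totals)]


def pearson_r(xs, ys):
    """Pearson's linear correlation coefficient between two equal-length lists."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length lists with at least 2 values")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


# Hypothetical marks for six students (not study data).
ia = [18, 22, 25, 20, 27, 15]
university = [84, 85, 98, 90, 100, 75]

x = x_marks(university, ia)
print("X marks:", x)
print(f"r(IA, X) = {pearson_r(ia, x):.3f}")
```

On Python 3.10 or later, `statistics.correlation(ia, x)` computes the same Pearson coefficient. The sketch reports r only; the p-values in this paper come from the authors' own analysis and are not reproduced here.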

Results

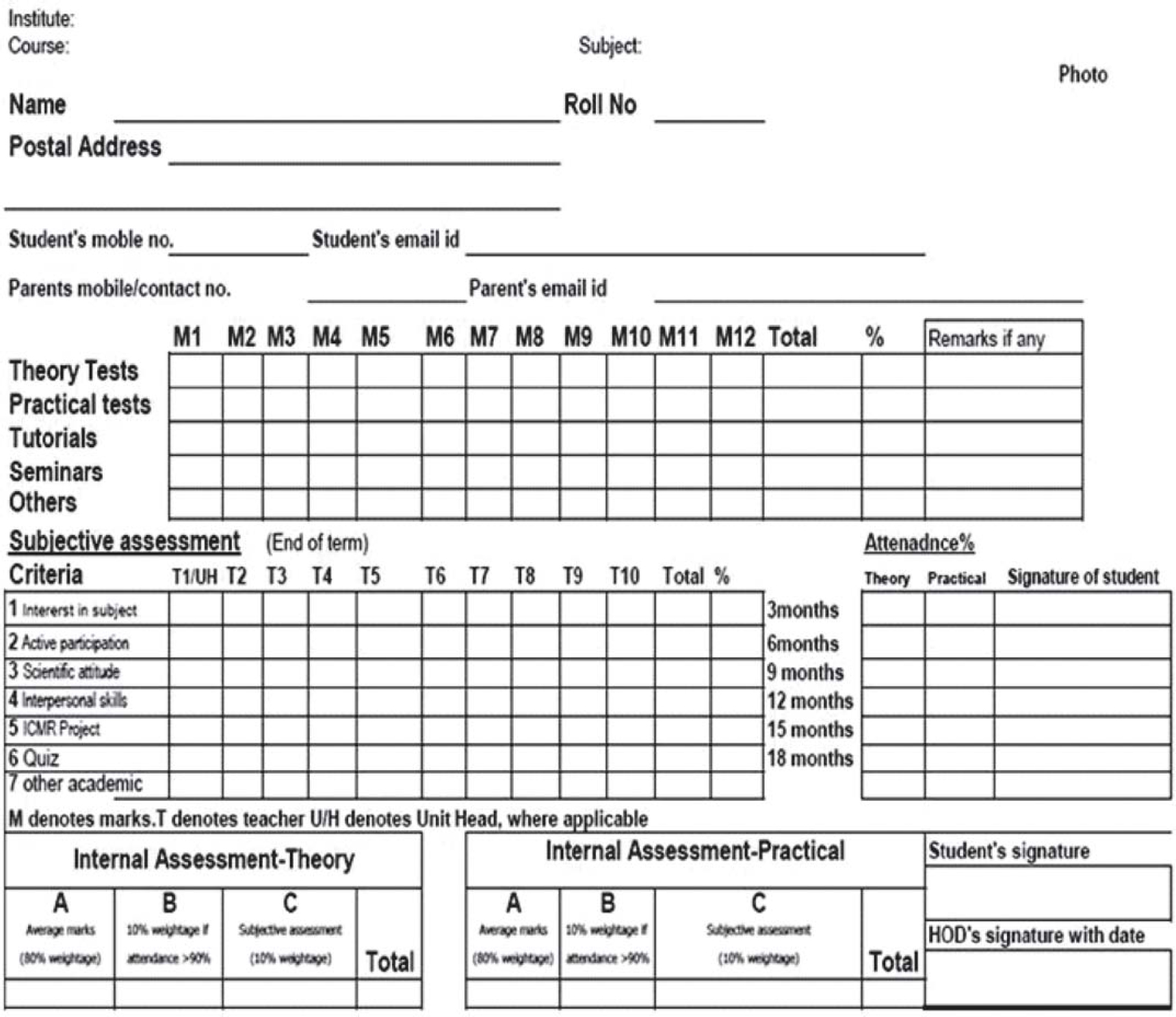

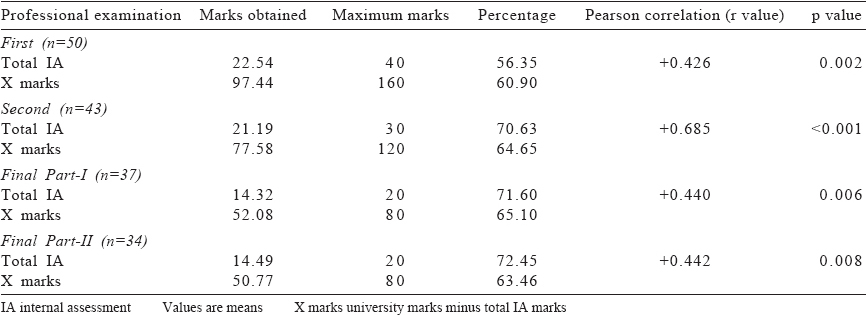

The marks of the 164 students from the four professional years, together with the X marks, are shown in Table 1. The IA marks show a positive correlation with X marks, which is statistically significant (p<0.01) in all professional examinations. The correlation coefficient ranges from +0.426 to +0.685. The scatter diagrams (Figure 2) show that higher IA marks are associated with higher university marks in all professionals.

Figure 2: The correlation between X marks and total internal assessment (IA) in one subject of the first (2a), second (2b), final-I (2c) and final-II (2d) professionals. X marks: total university marks minus total internal assessment marks in one subject of the professional

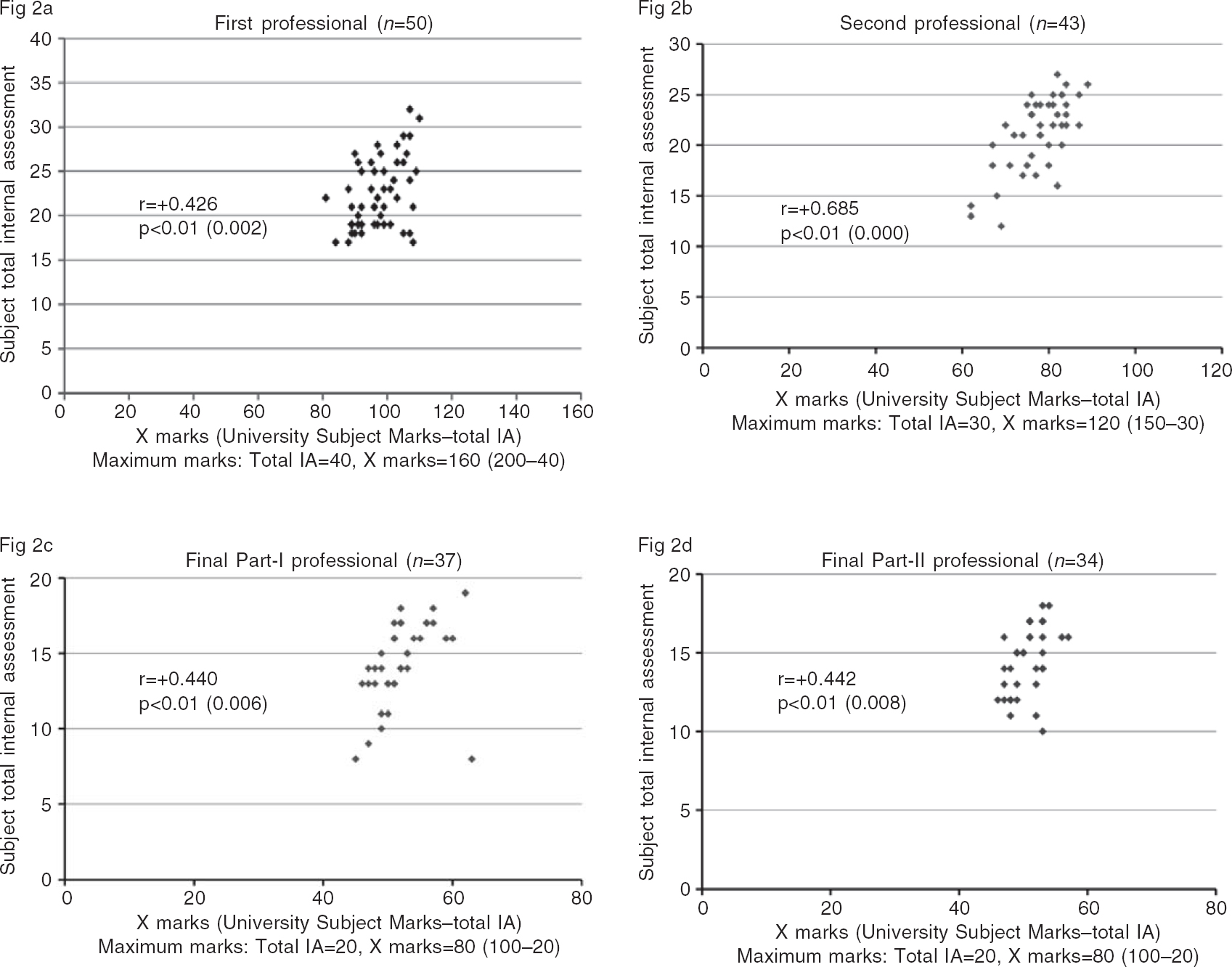

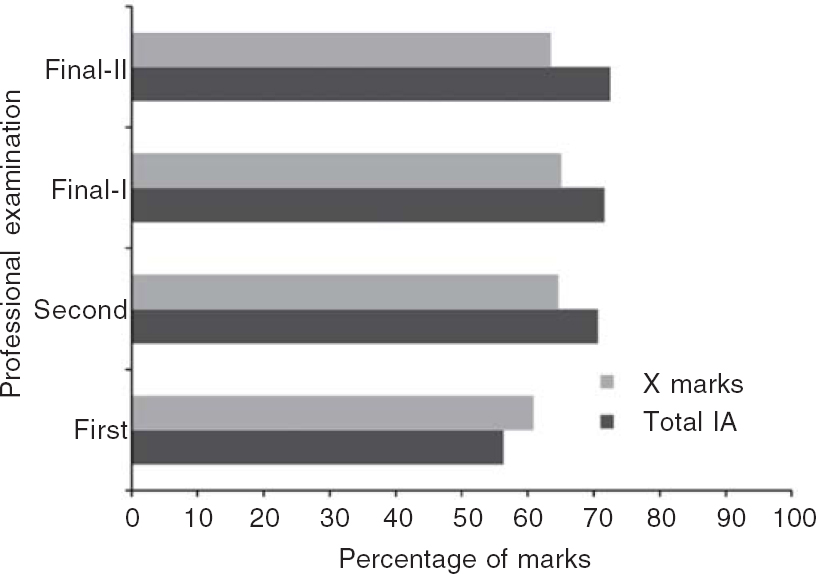

A comparison of the percentage marks in IA and X shows that in the first professional the X marks (summative assessment) are higher than IA, while in the other professionals IA marks are higher than X marks (Figure 3). However, the difference is not statistically significant in all professionals.
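Because IA and X marks have different maxima, they are compared as percentages. A minimal sketch, using hypothetical marks and the pharmacology maxima from the Introduction (30 IA marks and 150 − 30 = 120 X marks); the source does not name the significance test used, so the sketch stops at the descriptive comparison.

```python
def to_percent(marks, maximum):
    """Convert raw marks to percentages of the given maximum."""
    return [100 * m / maximum for m in marks]


def mean(values):
    """Arithmetic mean of a non-empty list."""
    return sum(values) / len(values)


IA_MAX = 30           # 15 theory IA + 15 practical IA (pharmacology example)
X_MAX = 150 - IA_MAX  # university total minus IA

# Hypothetical marks for five students (not study data).
ia = [18, 22, 25, 20, 27]
x = [66, 63, 73, 70, 73]

ia_pct = mean(to_percent(ia, IA_MAX))
x_pct = mean(to_percent(x, X_MAX))
print(f"mean IA = {ia_pct:.1f}%, mean X = {x_pct:.1f}%")
print("IA higher" if ia_pct > x_pct else "X higher or equal")
```

A per-student paired comparison (for example, a paired t-test on the two percentage lists) would be one way to test the difference, but that choice is an assumption, not the authors' stated method.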

Figure 3: Comparison of percentage marks of internal assessment (IA) and university examination in one subject of various professionals. X marks: total university marks minus total internal assessment marks in one subject of the professional

Discussion

Our results show a positive linear relationship between IA and university marks, indicating that better marks in IA are associated with better marks in the university examination. This can also be interpreted as ‘a student who performs well throughout the year is likely to score well in the summative assessment’. This indicates the predictive utility of IA, i.e. better marks in IA predict better marks in summative examinations. Because predictive evidence contributes to the construct, this also provides evidence for the construct validity of IA.

The converse also holds: low IA marks predict low marks in the summative assessment. IA marks can therefore be used to give feedback to students and teachers and, for students with low IA marks, to identify areas that should be targeted for improvement.

The comparison of IA and university marks shows that in the first professional, university marks are higher than IA marks, while in the other professionals the opposite holds, i.e. IA marks are higher than university marks. This is in line with previous reports that IA marks tend to be inflated.[4],[10] Despite this variation, however, the correlation remains positive.

One criticism of IA is its subjective nature; however, there is sufficient evidence in the literature that subjective assessments can be as reliable as highly objective ones.[11],[12],[13] Our IA module uses multiple methods and multiple teachers to calculate IA, which could have contributed to better future performance. The use of multiple methods and multiple assessors strengthens content-related evidence for validity and also improves reliability. Both forms of evidence contribute to construct validity. These results indicate that a well-designed IA can have good predictive value and construct validity. We could not find any other study correlating IA marks with university marks to substantiate this conclusion.

To conclude, despite its limitations of subjectivity and inflated marks, IA has construct validity and predictive utility. It can be used to provide corrective feedback to students to improve their learning.

1. Singh T, Gupta P, Singh D. Continuous internal assessment. In: Singh T, Gupta P, Singh D (eds). Principles of medical education. 3rd ed. New Delhi: Jaypee Brothers Medical Publishers; 2009:10–12.

2. Medical Council of India. Regulations on graduate medical education, 1997 (amended up to 2012 February). Available at www.mciindia.org/tools/announcement/Revised_GME_2012.pdf (accessed 15 Feb 2016).

3. Singh T, Anshu, Nath J. The quarter model: A proposed approach for in-training assessment of undergraduate students in Indian medical schools. Indian Pediatr 2012;49:871–6.

4. Gitanjali B. Academic dishonesty in Indian medical colleges. J Postgrad Med 2004;50:281–4.

5. Singh T, Anshu. Internal assessment revisited. Natl Med J India 2009;22:82^·.

6. Tongia SK. MCI internal assessment system in undergraduate medical education. Natl Med J India 2010;23:46–7.

7. Downing SM. Validity: On meaningful interpretation of assessment data. Med Educ 2003;37:830–7.

8. Downing SM. Reliability: On the reproducibility of assessment data. Med Educ 2004;38:1006–12.

9. Singh T, Singh D, Natu MV. A suggested model for internal assessment as per MCI guidelines on graduate medical education, 1997. Medical Council of India. Indian Pediatr 1997;35:345–7.

10. Mukaka MM. Statistics corner: A guide to appropriate use of correlation coefficient in medical research. Malawi Med J 2012;24:69–71.

11. Ananthakrishnan N, Shanthi AK. Attempts at regulation of medical education by the MCI: Issues of unethical and dubious practices for compliance by medical colleges and some possible solutions. Indian J Med Ethics 2012;9:37–42.

12. Van der Vleuten CPM, Norman GR, De Graaff E. Pitfalls in the pursuit of objectivity: Issues of reliability. Med Educ 1991;25:110–18.

13. Singh T. Student assessment: Issues and dilemmas regarding objectivity. Natl Med J India 2012;25:287–90.
